Original Systems

These are not implementations. They are architectures designed to solve problems most AI practitioners don't see.

Decision-Intelligence Layer

Mallacore-style work

The Problem

Businesses are drowning in dashboards. They possess vast amounts of data but lack clear signals. They have metrics but no meaning, reports but no recommended actions.

The Insight

True decision intelligence is not about presenting more data; it is about asking fewer, better questions. The system must know what matters before it can tell you what's wrong.

Proof Capsule: Mid-Market Inventory Operator

Context

Mid-market consumer goods distributor with 200+ SKUs, seasonal demand patterns, and a 15-person ops team. Previous system: Excel dashboards plus weekly review meetings. Problem: stockouts during peak periods, excess inventory during slow months, and a 3-5 day lag between signal and action.

Intervention

Implemented a 4-layer decision intelligence system: (1) raw data ingestion from POS and warehouse systems, (2) a signal detection layer that flags anomalies, (3) an early warning system with a 48-hour lookahead, (4) a human-mediated judgment interface showing recommended actions with confidence scores.
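The signal detection and judgment layers can be sketched roughly as follows. This is a minimal illustration, not the system described above: the z-score test, the 2.0 threshold, the confidence heuristic, the 0.7 deferral cutoff, and all names (Recommendation, detect_signal, recommend) are assumptions made for the example, and the 48-hour lookahead is not modelled.

```python
from dataclasses import dataclass
from statistics import mean, stdev
from typing import List, Optional


@dataclass
class Recommendation:
    sku: str
    action: str
    confidence: float   # hypothetical 0.0-1.0 scale
    needs_human: bool   # True when the system defers to the operator


def detect_signal(history: List[float], latest: float, z_threshold: float = 2.0) -> float:
    """Layer 2 (assumed form): z-score of the latest reading if anomalous, else 0.0."""
    if len(history) < 2:
        return 0.0
    sigma = stdev(history)
    if sigma == 0:
        return 0.0
    z = (latest - mean(history)) / sigma
    return z if abs(z) >= z_threshold else 0.0


def recommend(sku: str, history: List[float], latest: float,
              defer_below: float = 0.7) -> Optional[Recommendation]:
    """Layer 4 (assumed form): turn an anomaly into a recommended action with a confidence score."""
    z = detect_signal(history, latest)
    if z == 0.0:
        return None  # no signal, nothing to surface to the operator
    # Assumed heuristic: confidence grows with the size of the anomaly, capped at 1.0.
    confidence = min(abs(z) / 4.0, 1.0)
    action = "expedite reorder" if z > 0 else "pause reorder"
    return Recommendation(sku, action, round(confidence, 2), confidence < defer_below)
```

A borderline anomaly produces a low confidence score and is flagged for the operator rather than acted on, which is the behaviour the fourth layer is meant to capture.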

Measurement

90-day before/after comparison. Tracked: (1) stockout incidents per month, (2) decision latency (time from signal to action), (3) excess inventory holding costs, (4) operator confidence in recommendations (survey). Baseline established from the prior six months of data.
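Decision latency is the simplest of these metrics to compute from event timestamps. The sketch below assumes each record is a (signal raised, action taken) pair and summarizes with the median; both the record shape and the choice of median are assumptions, since the aggregation actually used is not stated.

```python
from datetime import datetime
from statistics import median
from typing import List, Tuple


def decision_latency_days(events: List[Tuple[datetime, datetime]]) -> float:
    """Median time, in days, between a signal being raised and an action being taken."""
    gaps = [(acted - raised).total_seconds() / 86400 for raised, acted in events]
    return median(gaps)
```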

Result

33% reduction in stockouts (from 12 incidents/month to 8). 40% reduction in decision latency (from 3-5 days to 1-2 days). 22% reduction in excess inventory costs. Operator confidence: 8.2/10 (vs 5.1/10 baseline). The system deferred to human judgment in 18% of cases.

Artifact

Redacted dashboard screenshot showing early warning interface with confidence scores and recommended actions (available upon request).

AI-Integrated Learning Systems

Adaptive frameworks for education

The Problem

The one-size-fits-all curriculum model fails the majority of learners. While adaptive systems exist, they typically optimize for speed and completion rather than for depth of understanding.

The Insight

Learning is not a linear progression. Mastery is achieved through a spiral curriculum, where learners revisit concepts at increasing levels of depth and abstraction.

Proof Capsule: Torah Education Program

Context

Advanced Torah learning program with 45 students at mixed skill levels, covering complex Talmudic concepts that require spiral mastery. Previous system: linear curriculum with fixed pacing. Problem: advanced students were bored, struggling students fell behind, and complex concepts had a 35% retention rate after 3 months.

Intervention

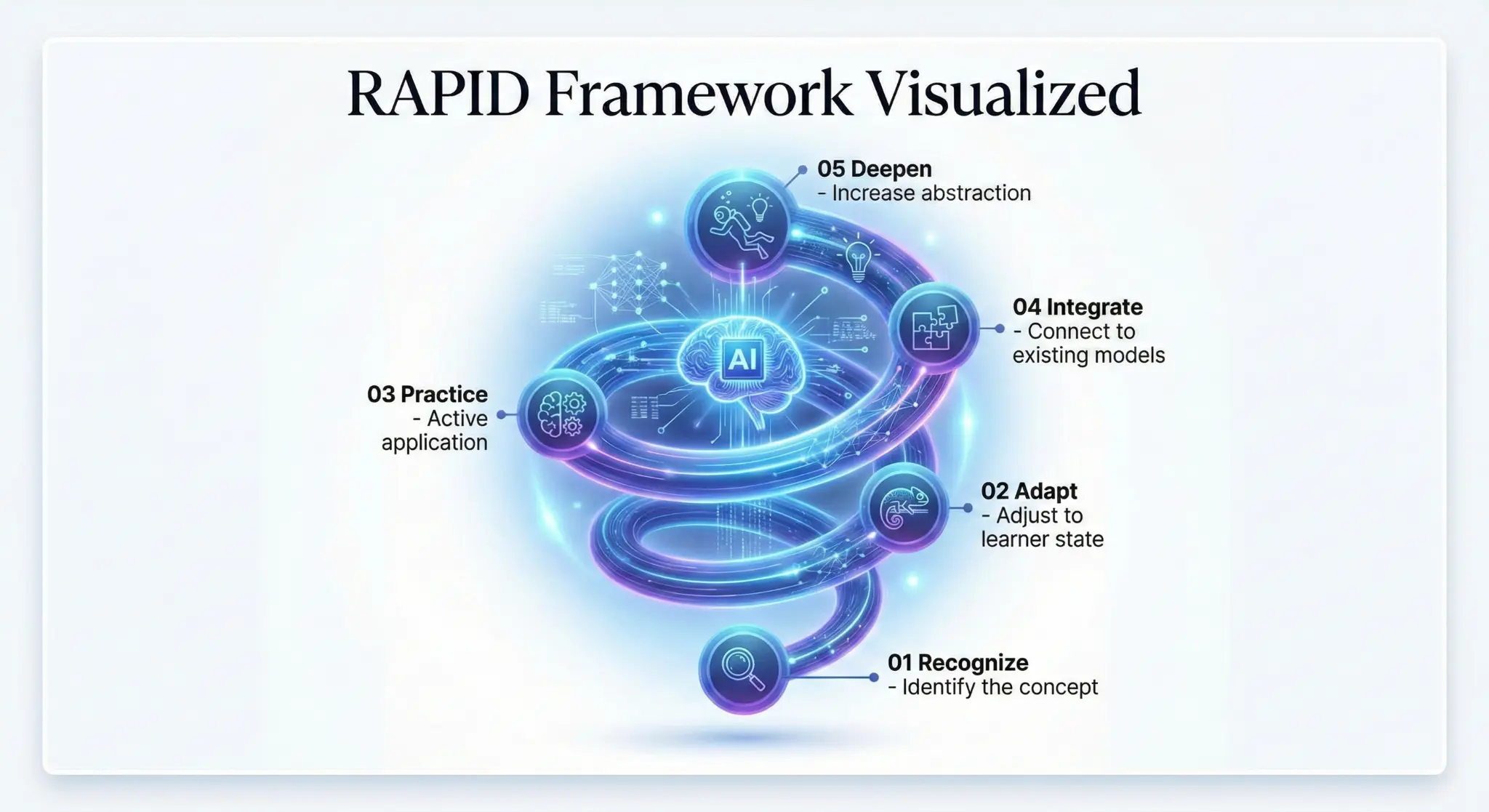

Implemented the RAPID framework: (1) Recognize current mastery level via a diagnostic, (2) Adapt content depth and pacing per student, (3) Practice with Socratic scaffolding, (4) Integrate concepts across domains, (5) Deepen through spiral revisiting. The system tracks concept mastery, not completion.
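Tracking mastery rather than completion can be sketched as a small per-concept record. This is an illustrative sketch only: the 1-5 depth scale, the 0.8 mastery threshold, and the names ConceptRecord and next_step are hypothetical and not taken from the program itself.

```python
from dataclasses import dataclass, field
from typing import List


@dataclass
class ConceptRecord:
    name: str
    depth: int = 1                                      # hypothetical 1-5 depth scale
    scores: List[float] = field(default_factory=list)   # assessment scores, 0.0-1.0

    def mastered(self, threshold: float = 0.8) -> bool:
        """Mastered at the current depth if the latest score meets the threshold."""
        return bool(self.scores) and self.scores[-1] >= threshold


def next_step(record: ConceptRecord, max_depth: int = 5) -> str:
    """Choose the next activity from demonstrated mastery, not from lessons completed."""
    if not record.mastered():
        return f"practice {record.name} at depth {record.depth}"
    if record.depth < max_depth:
        record.depth += 1
        record.scores.clear()  # mastery must be shown again at the new depth
        return f"revisit {record.name} at depth {record.depth}"
    return f"integrate {record.name} with other domains"
```

Because scores reset at each new depth, a student keeps returning to the same concept until mastery is shown at every level, which is one simple way to model spiral revisiting.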

Measurement

6-month pilot with control group (traditional curriculum). Assessed: (1) Concept retention at 3 months (standardized assessment), (2) Student engagement scores (weekly survey), (3) Instructor time spent on remediation. Pre/post knowledge tests administered.

Result

40% higher retention of complex concepts in relative terms (75% vs 53% in the control group). Student engagement: 8.7/10 (vs 6.2/10 control). Instructor remediation time reduced by 28%. Students revisited core concepts an average of 4.2 times at increasing depth, vs 1.3 times in the linear model.
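The retention figure can be read two ways, as a relative uplift or as a difference in percentage points. The short sketch below computes both from the stated 75% and 53%; the function names are illustrative, and reading the headline figure as relative uplift is an assumption.

```python
def relative_uplift(treatment: float, control: float) -> float:
    """Percentage by which the treatment value exceeds the control value."""
    return (treatment - control) / control * 100


def point_difference(treatment: float, control: float) -> float:
    """Absolute gap between two percentages, in percentage points."""
    return treatment - control


uplift = relative_uplift(75, 53)       # roughly 41.5, consistent with "40% higher"
gap = point_difference(75, 53)         # 22 percentage points
```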

Artifact

Anonymized learning pathway visualization showing spiral progression and mastery tracking (available upon request).

Human-AI Mediation Frameworks

Where AI augments discernment rather than replacing it

In YMYL (Your Money or Your Life) contexts, such as health, education, finance, and spirituality, errors made by AI systems are not mere bugs; they are betrayals of trust that can have significant consequences.

AI as Advisor

The system provides recommendations, not commands.

Transparency

The reasoning behind an output is made visible to the user.

Calibration

The system communicates its confidence level honestly.

Human Override

A human expert can always intervene, and their decision is logged.
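The four principles map naturally onto a small data model. The following is a minimal sketch under stated assumptions: the class names (Advice, Decision, MediationLog), the 0.0-1.0 confidence scale, and the in-memory log are all hypothetical, chosen only to show how each principle could become a concrete field or rule.

```python
from dataclasses import dataclass, field
from datetime import datetime, timezone
from typing import List, Optional


@dataclass
class Advice:
    recommendation: str   # AI as Advisor: a suggestion, never an executed command
    rationale: str        # Transparency: the reasoning shown to the user
    confidence: float     # Calibration: stated confidence, assumed 0.0-1.0 scale


@dataclass
class Decision:
    advice: Advice
    final_action: str
    overridden: bool
    decided_by: str
    timestamp: str


@dataclass
class MediationLog:
    entries: List[Decision] = field(default_factory=list)

    def decide(self, advice: Advice, expert: str,
               override: Optional[str] = None) -> Decision:
        """Human Override: the expert's choice always wins, and every decision is logged."""
        final = override if override is not None else advice.recommendation
        decision = Decision(advice, final, override is not None, expert,
                            datetime.now(timezone.utc).isoformat())
        self.entries.append(decision)
        return decision
```

In this sketch the system never acts on its own output: an action exists only once a named expert has accepted or replaced the recommendation, and both outcomes leave a log entry.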